Localizing Firearm Carriers by Identifying Human-Object Pairs

Abstract:

Visual identification of gunmen in a crowd is a challenging problem that requires resolving the association of a person with an object (firearm). We present a novel approach to address this problem by defining human-object interaction (and non-interaction) bounding boxes. In a given image, humans and firearms are detected separately. Each detected human is paired with each detected firearm, allowing us to create a paired bounding box that contains both the object and the human. A network is trained to classify these paired bounding boxes according to whether or not the human is carrying the identified firearm. Extensive experiments were performed to evaluate the effectiveness of the algorithm, including exploiting the full pose of the human, hand key-points, and their association with the firearm. Knowledge of spatially localized features is key to the success of our method, which uses multi-size proposals with adaptive average pooling. We have also extended an earlier firearm detection dataset by adding more images and tagging the human-firearm pairs in the extended dataset (including bounding boxes for firearms and gunmen). The experimental results (AP = 78.5) demonstrate the effectiveness of the proposed method.

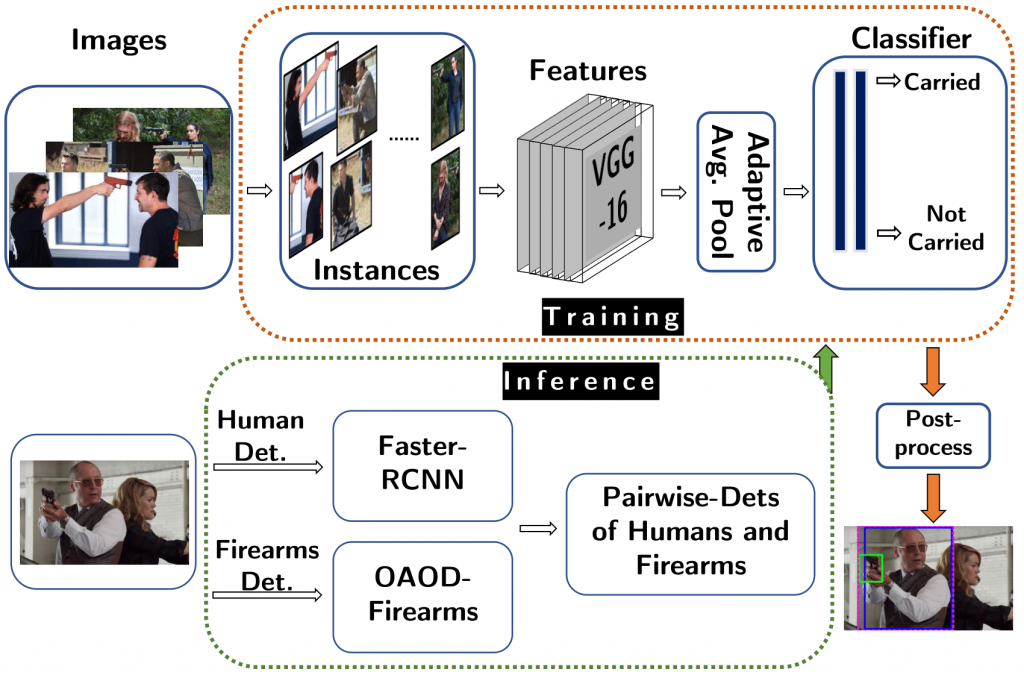

Overview of Proposed Approach:

Firearm carrier localization in an image is a challenging task because of human pose variation, occlusion, clutter, and the varied sizes and shapes of firearms. We propose a novel approach to tackle this problem using multi-size human-object proposals. Human and firearm bounding boxes are detected using OAOD and Faster RCNN. Image-level human-object proposals are generated by extending each firearm box to each human box, so that every proposal encloses one human-firearm pair. Each proposal is passed through a classifier, which is trained to predict whether the firearm and the human in the proposal are associated. The classifier uses adaptive average pooling to extract features from multi-size inputs. As a post-processing step, the instance-level classification results are mapped back to the image for visualization.
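The two mechanical steps above, building paired proposals and pooling multi-size inputs to a fixed size, can be illustrated with a minimal sketch. It is not the authors' implementation: the function names (`union_box`, `make_proposals`, `adaptive_avg_pool_2d`) are hypothetical, boxes are assumed to be `(x1, y1, x2, y2)` tuples, and a plain nested list stands in for a feature map that would normally come from a convolutional network. The pooling bins use the common floor/ceil rule, in which bin `i` spans `floor(i * in / out)` up to `ceil((i + 1) * in / out)`.

```python
from itertools import product


def union_box(a, b):
    """Smallest (x1, y1, x2, y2) box enclosing both boxes a and b."""
    return (min(a[0], b[0]), min(a[1], b[1]), max(a[2], b[2]), max(a[3], b[3]))


def make_proposals(humans, firearms):
    """Pair every detected human with every detected firearm (assumed format)."""
    return [
        {"human": h, "firearm": f, "box": union_box(h, f)}
        for h, f in product(humans, firearms)
    ]


def adaptive_avg_pool_2d(grid, out_h, out_w):
    """Average-pool a 2D grid of any size down to a fixed out_h x out_w grid."""
    in_h, in_w = len(grid), len(grid[0])
    pooled = []
    for i in range(out_h):
        # Row range of bin i: floor(i*in_h/out_h) .. ceil((i+1)*in_h/out_h)
        r0 = (i * in_h) // out_h
        r1 = ((i + 1) * in_h + out_h - 1) // out_h
        row = []
        for j in range(out_w):
            c0 = (j * in_w) // out_w
            c1 = ((j + 1) * in_w + out_w - 1) // out_w
            cells = [grid[r][c] for r in range(r0, r1) for c in range(c0, c1)]
            row.append(sum(cells) / len(cells))
        pooled.append(row)
    return pooled


# One human and two firearms give two proposals of different sizes.
humans = [(10, 20, 60, 200)]
firearms = [(50, 90, 120, 130), (300, 40, 340, 80)]
proposals = make_proposals(humans, firearms)

# Inputs of different sizes are pooled to the same 1 x 2 output shape.
small = adaptive_avg_pool_2d([[1, 2, 3, 4], [5, 6, 7, 8]], 1, 2)
large = adaptive_avg_pool_2d([[float(r + c) for c in range(7)] for r in range(5)], 1, 2)
```

Because the output shape depends only on `out_h` and `out_w`, proposals of any size can feed the same fixed-size classifier head, which is the property the multi-size design relies on.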

Dataset:

Dataset will be added soon.

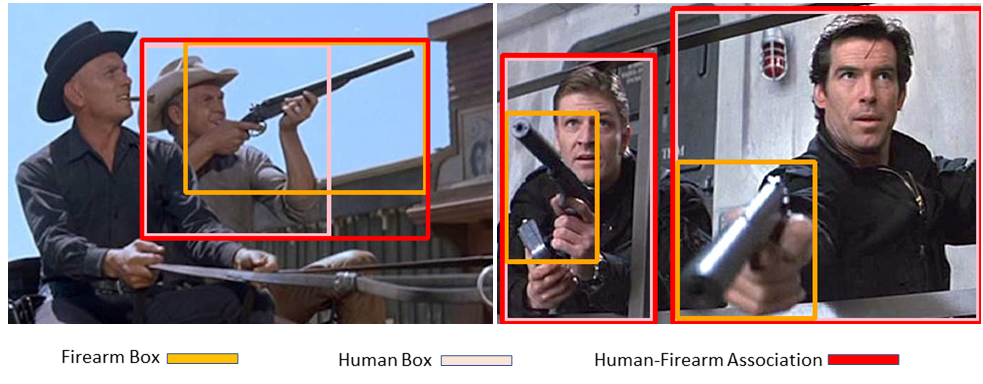

Sample images from the dataset are shown in the figure. For each image, only the instances that contain a true association (a carried firearm) are highlighted.

Results:

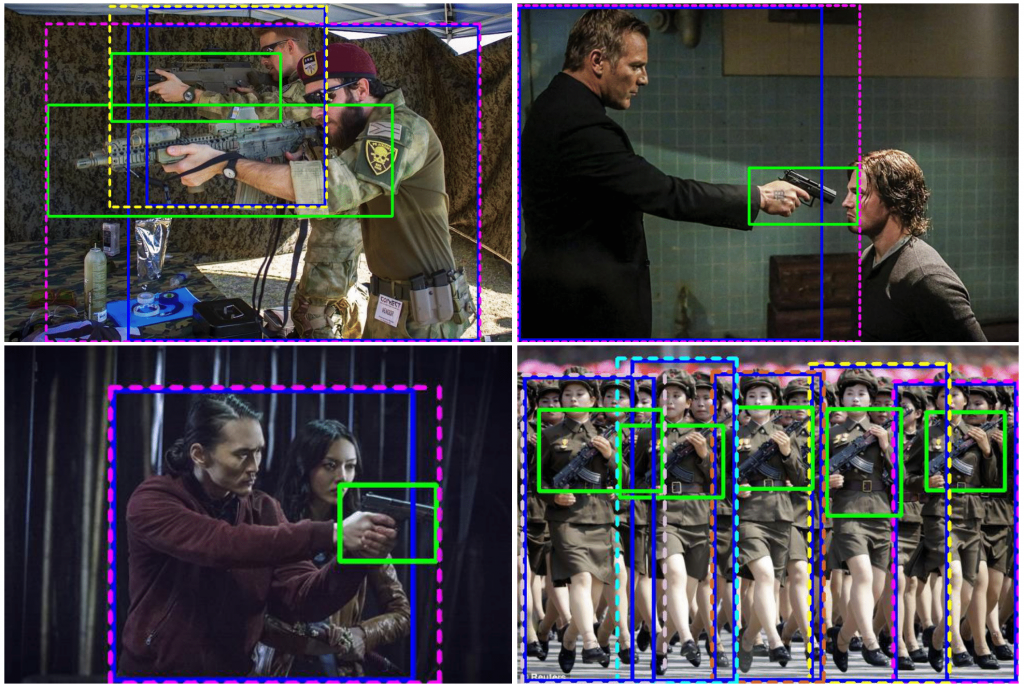

Results of firearm carrier localization are shown in the figure. The dotted boxes show the detected firearm carriers, whereas the solid boxes indicate the human and the firearm that are associated. Different colors are used to indicate different firearm carriers in the same image. Green boxes are used for firearms, and blue boxes are used for humans.

Links:

arXiv (Paper: Localizing Firearm Carriers by Identifying Human-Object Pairs)

Github (Link will be added soon)

Keywords:

Human-Firearm Interaction, Paired Human-Firearm boxes, Localizing Firearm Carriers, Human-Object Interaction, Firearm Carrier Detection, Firearm Carrier Localization, Deep Learning, Computer Vision

BIBTEX:

@article{basit2020localizing,

title={Localizing Firearm Carriers by Identifying Human-Object Pairs},

author={Abdul Basit and Muhammad Akhtar Munir and Mohsen Ali and Arif Mahmood},

year={2020},

eprint={2005.09329},

archivePrefix={arXiv},

primaryClass={cs.CV},

url = {http://arxiv.org/abs/2005.09329}

}